Non-Line-of-Sight Imaging using Phasor Field Virtual Wave Optics

Abstract

Non-line-of-sight (NLOS) imaging makes it possible to observe objects partially or fully occluded from direct view by analyzing indirect diffuse reflections off a secondary relay surface. Despite its many potential applications, existing methods lack practical usability due to several shared limitations, including the assumptions of single scattering only, no occlusions, and Lambertian reflectance. Line-of-sight (LOS) imaging systems, on the other hand, can address these and other imaging challenges despite relying on the mathematically simple processes of linear diffractive wave propagation. In this work we show that the NLOS imaging problem can also be formulated as a diffractive wave propagation problem. This allows us to image NLOS scenes from raw time-of-flight data by applying the mathematical operators that model wave propagation inside a conventional line-of-sight imaging system. By doing this, we have developed a method that yields a new class of imaging algorithms mimicking the various capabilities of LOS cameras. To demonstrate our method, we derive three imaging algorithms, each with its own novel capabilities, modeled after three different LOS imaging systems. These algorithms rely on solving a wave diffraction integral, namely the Rayleigh-Sommerfeld Diffraction (RSD) integral. Fast solutions to RSD and its approximations are readily available, directly benefiting our method. We demonstrate, for the first time, NLOS imaging of complex scenes with strong multiple scattering and ambient light, arbitrary materials, large depth range, and occlusions. Our method handles these challenging cases without explicitly developing a light transport model. We believe that our approach will help unlock the potential of NLOS imaging and enable the development of novel applications not restricted to laboratory conditions, as shown in our results.
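
For reference, one common scalar form of the RSD integral named in the abstract is sketched below. The symbols are assumptions introduced for illustration and are not defined on this page: P_ω is the monochromatic field on a source plane S, x_s and x_d are points on the source and destination planes, k is the wavenumber for wavelength λ, and γ collects constant and slowly varying amplitude factors.

```latex
\[
P_\omega(x_d) \;=\; \gamma \int_{S} P_\omega(x_s)\,
\frac{e^{\,i k \lvert x_d - x_s \rvert}}{\lvert x_d - x_s \rvert}\; dx_s ,
\qquad k = \frac{2\pi}{\lambda}
\]
```

Read this way, every point on the source plane acts as a spherical emitter, and the field at x_d is the sum of those contributions.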


Description

Results

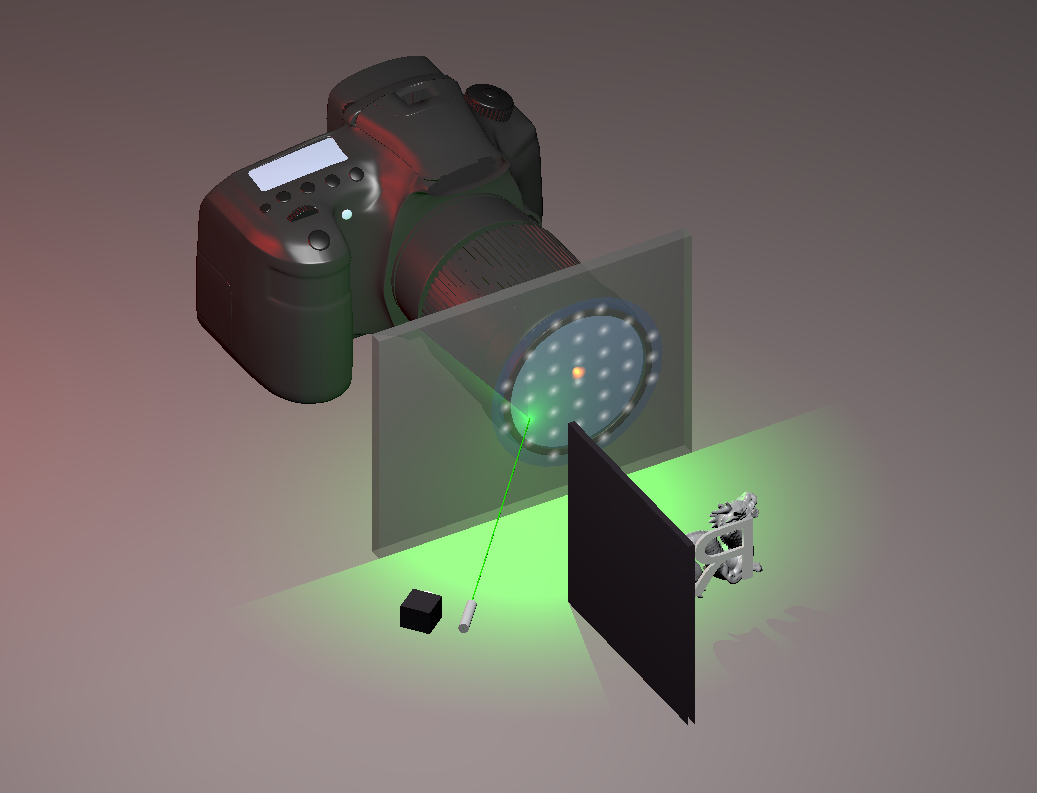

Framework of the phasor field virtual camera. With the phasor field method, we can use classical diffraction theory to build a virtual camera that looks around the corner.
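
A minimal sketch of the first step of such a framework, assuming the virtual wave is obtained by convolving each time-resolved measurement on the relay wall with a Gaussian-windowed complex pulse. The function names, the 16 ps bin width in the usage note, and the pulse length are hypothetical choices for illustration and do not come from this page; the 6 cm wavelength is the value quoted in the reconstruction caption further down.

```python
import numpy as np

C = 299_792_458.0  # speed of light in m/s


def virtual_pulse(wavelength_m, bin_width_s, n_cycles=4.0):
    """Gaussian-windowed complex pulse sampled at the histogram bin width.

    n_cycles is an illustrative assumption: the +/- 3 sigma extent of the
    Gaussian envelope spans this many periods of the virtual wave.
    """
    period = wavelength_m / C
    sigma = n_cycles * period / 6.0
    half = int(np.ceil(3.0 * sigma / bin_width_s))
    t = np.arange(-half, half + 1) * bin_width_s  # symmetric, odd length
    envelope = np.exp(-t**2 / (2.0 * sigma**2))
    carrier = np.exp(2j * np.pi * t / period)
    return envelope * carrier


def phasor_field(transients, wavelength_m, bin_width_s):
    """Convolve each transient (one row per wall point) with the pulse.

    transients: real array of shape (n_points, n_bins).
    Returns a complex array of the same shape.
    """
    pulse = virtual_pulse(wavelength_m, bin_width_s)
    return np.stack(
        [np.convolve(row, pulse, mode="same") for row in transients]
    )
```

Calling `phasor_field(histograms, 0.06, 16e-12)` on a hypothetical `(n_points, n_bins)` array of photon-count histograms returns a complex field on the wall, which diffraction operators can then propagate into the hidden scene.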

Measurement and phasor field on the scanning wall. The left image shows the hardware scanning the wall. The right image shows the virtual phasor field of the hidden scene (global and local display range for each frame). This raw virtual phasor field wavefront contains both the direct and the indirect signal from the hidden scene; the shadow of the chair is also visible at around time index 800.

Virtual transient camera. Finally, the hidden image is decoded using diffraction theory. Moreover, we can build a virtual camera that sees only the direct signal from the hidden scene.

Spatio-temporal hidden movie. The virtual phasor field camera reconstructs both the direct and the indirect wavefronts in the hidden scene.

Virtual regular camera using inverse diffraction. We can also build a virtual regular camera using standard inverse diffraction. The image on the left shows inverse diffraction with the Φ operator. Examples are shown on the right: the refocusing results are calculated with Rayleigh-Sommerfeld Diffraction (RSD) (top) and Fresnel diffraction (bottom).
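
The refocusing idea can be sketched as a brute-force sum at a single virtual wavelength. This is an illustration under stated assumptions, not the implementation behind the figure: the helper names, the grid sizes, and the simulated point emitter are all hypothetical. The RSD kernel uses the exact distance between points on two parallel planes, while the Fresnel kernel uses the paraxial approximation of that distance.

```python
import numpy as np


def _lateral_offsets(src_xy, dst_xy):
    """Pairwise x and y offsets, shape (n_dst, n_src)."""
    dx = dst_xy[:, None, 0] - src_xy[None, :, 0]
    dy = dst_xy[:, None, 1] - src_xy[None, :, 1]
    return dx, dy


def rsd_kernel(src_xy, dst_xy, depth, wavelength, inverse=False):
    """exp(+/- i k r) / r with the exact distance r between the planes."""
    k = 2.0 * np.pi / wavelength
    dx, dy = _lateral_offsets(src_xy, dst_xy)
    r = np.sqrt(dx**2 + dy**2 + depth**2)
    sign = -1.0 if inverse else 1.0
    return np.exp(sign * 1j * k * r) / r


def fresnel_kernel(src_xy, dst_xy, depth, wavelength, inverse=False):
    """Paraxial variant: r is approximated by depth + rho^2 / (2 depth)."""
    k = 2.0 * np.pi / wavelength
    dx, dy = _lateral_offsets(src_xy, dst_xy)
    r_approx = depth + (dx**2 + dy**2) / (2.0 * depth)
    sign = -1.0 if inverse else 1.0
    return np.exp(sign * 1j * k * r_approx) / depth


def grid(extent, n):
    """n x n lateral sample points centred on the origin, shape (n*n, 2)."""
    axis = np.linspace(-extent / 2.0, extent / 2.0, n)
    xx, yy = np.meshgrid(axis, axis, indexing="xy")
    return np.column_stack([xx.ravel(), yy.ravel()])


def refocus_point_demo(kernel_fn=rsd_kernel):
    """Simulate a point emitter, then refocus at its depth.

    Returns the lateral position of the brightest refocused pixel.
    All numbers below are illustrative assumptions.
    """
    wavelength, depth = 0.06, 1.0          # metres
    aperture = grid(1.6, 65)               # virtual aperture on the wall
    target = grid(1.0, 21)                 # refocus plane in the hidden scene
    emitter = np.array([[0.2, -0.1]])
    # Forward step: field that the emitter produces on the aperture.
    field = kernel_fn(emitter, aperture, depth, wavelength) @ np.ones(1)
    # Inverse step: conjugate-phase sum back to the target plane.
    back = kernel_fn(aperture, target, depth, wavelength, inverse=True)
    image = np.abs(back @ field)
    return target[np.argmax(image)]
```

Passing `fresnel_kernel` instead of `rsd_kernel` to `refocus_point_demo` swaps in the paraxial model for both steps, which is cheaper to evaluate but less accurate at wide angles.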

Hardware setup. We use a standard non-line-of-sight picosecond imaging system, which consists of a picosecond laser, a single-photon avalanche diode (SPAD), time-correlated single photon counting (TCSPC) hardware, a galvanometer (for scanning), and a stereo camera (for calibration).

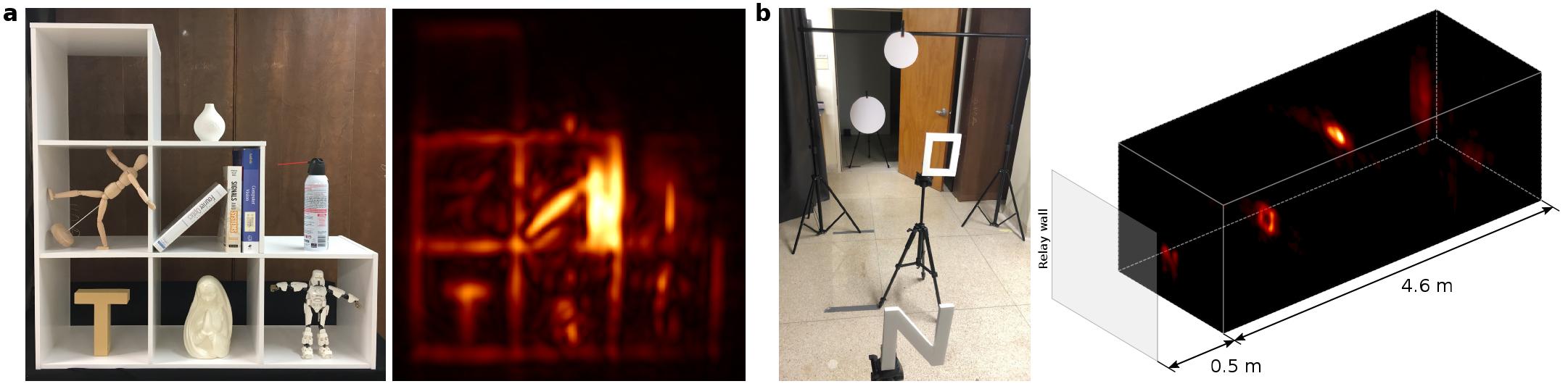

Reconstructions of a complex NLOS scene. a. Photograph of the scene as seen from the relay wall. The scene contains occluding geometries, multiple anisotropic surface reflectances, large depth, and strong ambient and multiply scattered light. b. 3D visualization of the reconstruction using phasor field (λ = 6 cm). We include the relay wall location and the coverage of the virtual aperture for illustration purposes. c. Frontal view of the scene, captured with an exposure time of 10 ms per laser position. d. Frontal view captured with just a 1 ms exposure time (24 seconds for the complete scan).

Robustness of our technique. a. Reconstruction in the presence of strong ambient illumination (all the lights on during capture). b. Hidden scene with a large depth range, leading to very weak signals from objects farther away.

FAQ

1. What is Non-Line-of-Sight Imaging?

Non-line-of-sight (NLOS) imaging is a new imaging method that makes it possible to see around corners. Using fast lasers and detectors along with advanced algorithms, we can reconstruct images of scenes from indirect light after it has reflected off other surfaces in the scene. In other words, our NLOS imaging system can project a virtual camera onto any surface, and our reconstruction shows what this virtual projected camera sees. This makes it possible to see into rooms through windows, into caves, or around obstacles on the road.


2. What is the paper about?

Existing NLOS implementations rely on complex, custom-developed reconstruction methods and typically work on small scenes with little depth and simple materials. Often the reconstructions consist of single, mostly flat objects surrounded by carefully prepared black materials. Each additional scene feature, such as a larger scene size, multiple objects imaged at a time, arbitrary materials, or large depth, might therefore require a new scientific breakthrough.

In our work, we show that the theory that describes imaging in regular line-of-sight cameras (such as photography cameras or the human eye) can be used to reconstruct NLOS images. Line-of-sight cameras have no problem seeing complex scenes, and we have a sophisticated understanding of the challenges and solutions for line-of-sight systems. With our Phasor Field Virtual Wave method, we now have the same capabilities for NLOS cameras.


3. What applications will this new method enable?

In the paper, we show several examples of the capabilities of virtual wave cameras. We reconstruct scenes that are larger and more complex than what has previously been demonstrated. In particular, our reconstructed scenes have much larger depths of up to 5 meters. We also show that our reconstructions work with very noisy data resulting from short exposure times or ambient light. In addition, we show that our virtual cameras can easily do things beyond volume reconstruction. For example, we can reconstruct a video of a light pulse moving through the hidden scene, showing how light bounces back and forth between different scene objects.


News Press

Acknowledgements

This work was funded by DARPA through the DARPA REVEAL project (HR0011-16-C-0025), the NASA Innovative Advanced Concepts (NIAC) program (NNX15AQ29G), the Air Force Office of Scientific Research (AFOSR) Young Investigator Program (FA9550-15-1-0208), the Office of Naval Research (ONR, N00014-15-1-2652), the European Research Council (ERC) under the EU’s Horizon 2020 research and innovation programme (project CHAMELEON, grant No 682080), the Spanish Ministerio de Economía y Competitividad (project TIN2016-78753-P), and the BBVA Foundation (Leonardo Grant for Researchers and Cultural Creators). We would like to acknowledge Jeremy Teichman for helpful insights and discussions in developing the phasor field model. We would also like to acknowledge Mauro Buttafava, Alberto Tosi, and Atul Ingle for help with the gated SPAD detector, and Belen Masia, Sandra Malpica and Miguel Galindo for careful reading of the manuscript.


Related Work

Non-Line-of-Sight Imaging:

Non-Line-of-Sight Imaging using Phasor Field Virtual Wave Optics, Nature. [Website]

Phasor Field Diffraction Based Reconstruction for Fast Non-Line-of-Sight Imaging Systems, Nature Communications. [Website]

The role of Wigner Distribution Function in Non-Line-of-Sight Imaging, ICCP. [Website]

Analysis of Feature Visibility in Non-Line-of-Sight Measurements, CVPR. [Website]

On the effect of BRDFs on Phasor Field NLOS imaging, ICASSP. [Website]

A dataset for benchmarking time-resolved non-line-of-sight imaging, SIGGRAPH. [Website]

Phasor field waves: A Huygens-like light transport model for non-line-of-sight imaging applications, Optics Express. [Paper]

Paraxial theory of phasor-field imaging, Optics Express. [Paper]

Phasor field waves: a mathematical treatment, Optics Express. [Paper]

Non-line-of-sight-imaging using dynamic relay surfaces, Optics Express. [Paper]
